Most board decks fail quietly. They look professional, tell a coherent story, and get polite nods from investors – but underneath, the logic doesn’t hold. The narrative says one thing. The operational reality says another. These gaps are what I call logic leaks, and they’re expensive. By the time they surface as a missed milestone or a stalled fundraise, the damage is already done.

Logic leaks happen because founders move faster than their documentation can follow. Over time, the story you’re telling diverges from the company you’re actually building. The vision slide promises aggressive market expansion, but the org chart shows a hiring freeze. The financial model forecasts improving margins, but those margins depend on temporary vendor discounts that expire next quarter. You’re selling an engine that your chassis can’t support.

The Signal Audit is a diagnostic approach I developed to catch these structural failures before they become irreversible. It’s not about judging the quality of individual slides – it’s about stress-testing whether the business logic they describe actually holds together.

The 5 Signals Framework

The audit is built on a system I call the 5 Signals. These aren’t performance metrics. They’re structural indicators that reveal whether your startup is internally coherent and externally credible. When the signals are aligned, decisions get easier, execution gets faster, and investors see clarity instead of risk. When they’re misaligned, friction compounds until something breaks.

Here’s what each signal measures:

Signal I: Vision

Do you and your co-founders actually agree on where you’re going? Not just in broad terms, but in the specific decisions that vision implies – who you’re building for, what you’re willing to say no to, how you define success. Weak vision signals show up as inconsistent pitches, roadmap whiplash, and teams that don’t know what they’re optimizing for.

Signal II: Value

Are you solving a problem urgent enough that someone will pay to fix it? This isn’t about features or technology – it’s about whether your solution creates a meaningful outcome for a real person with a real budget. Weak value signals look like high demo interest but low conversion, or users who churn after onboarding because they never felt the pain you thought you were solving.

Signal III: System

Can you prioritize under pressure, or are you just reacting to noise? System is about execution clarity – whether your team knows what matters most right now, whether you have mechanisms to track progress and adapt, whether you can say no to distractions that don’t align with your strategy. Weak system signals look like chronic busyness without momentum.

Signal IV: Market

Are you entering a real, reachable market with a credible wedge, or are you guessing? This isn’t about TAM size – it’s about demand, timing, competitive positioning, and whether you have a specific strategy for gaining traction. Weak market signals show up as broad targeting (“we’re building for SMBs”), vague differentiation, or customers who like your idea but never convert.

Signal V: Momentum

Are you actually moving forward in ways that matter, or just staying busy? Momentum is the external proof of your internal signals – revenue, retention, engagement, and strategic milestones. It’s what investors and customers see. Weak momentum signals look like vanity metrics, one-time spikes that don’t compound, or traction that depends on unsustainable tactics.

These signals are interconnected. A weak vision signal will degrade your system. A confused value signal will undermine your momentum. An unclear market signal will make your traction meaningless. The audit works by checking whether the signals reinforce each other or cancel each other out.

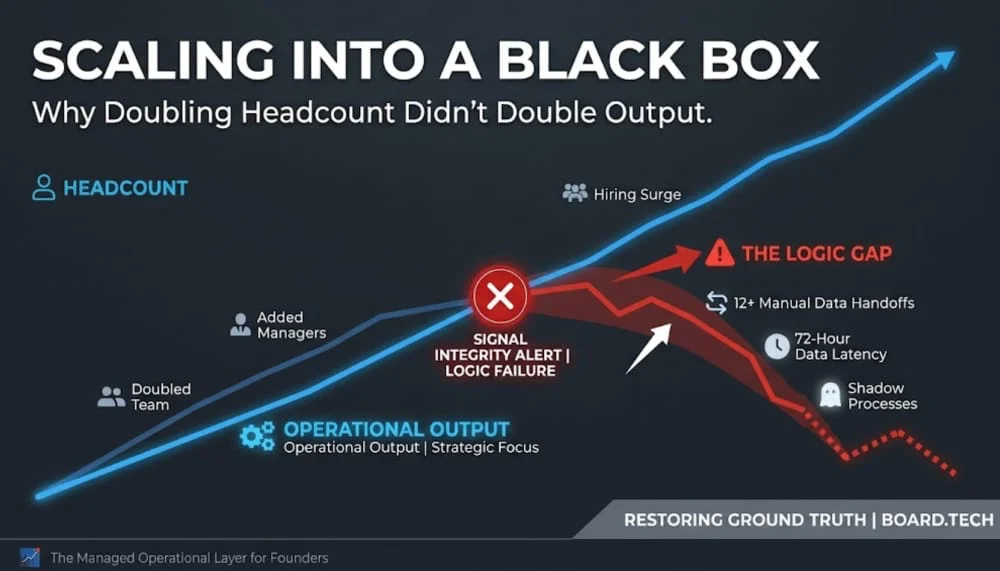

The Anatomy of a Logic Leak

Most logic leaks are signal mismatches – places where one part of your deck contradicts another. You claim your competitive advantage is proprietary technology, but your financial forecast shows 80% of capital going to customer acquisition instead of R&D. You’re betting against your own narrative.

Or you present a bold market-expansion strategy that requires specialized engineering talent, yet your org chart shows a hiring freeze. The strategy and the system are out of sync. On their own, both slides might look fine. Together, they reveal a structural contradiction.

The most dangerous leaks are efficiency mirages – situations where your metrics look good on the surface but depend on temporary conditions that won’t last. Your margins are improving, but only because of vendor discounts that expire in two quarters. Your user growth is strong, but it’s driven by a promotional campaign you can’t afford to sustain. The signal of profitability or traction is actually noise. The long-term integrity of the business is compromised for a short-term story.
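
To make the efficiency mirage concrete, here is a minimal sketch in Python. Every figure in it is hypothetical, as is the helper name `gross_margin`; none of it comes from a real deck. It compares the margin a deck would report while a vendor discount is active with the margin that remains once the discount lapses.

```python
def gross_margin(revenue: float, cogs: float) -> float:
    """Gross margin as a fraction of revenue."""
    return (revenue - cogs) / revenue


# Hypothetical quarterly figures, for illustration only.
revenue = 1_000_000.0
list_price_cogs = 600_000.0   # vendor costs at undiscounted rates
temporary_discount = 0.25     # assumed vendor discount that will expire

# Margin as it appears in the deck, with the discount applied.
reported = gross_margin(revenue, list_price_cogs * (1 - temporary_discount))
# Margin once the discount expires and costs return to list price.
underlying = gross_margin(revenue, list_price_cogs)

print(f"Reported margin:   {reported:.0%}")
print(f"Underlying margin: {underlying:.0%}")
```

If the gap between the two numbers is large, the improving-margin story is probably resting on the discount rather than on the business.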

Why Forensic Clarity Matters

Your board isn’t just there to support you – they’re there to mitigate risk and govern the company. When you present a deck with undetected logic leaks, you’re not just presenting a plan. You’re signaling a lack of control over your own operational reality.

This is where the Signal Audit adds value. It provides a second set of eyes that isn’t caught up in the daily fires of the business. By the time a board deck reaches the meeting, it’s been polished to a high gloss. The audit strips that gloss away to check whether the logic underneath is sound.

The goal is to move from unconscious risk – where you don’t know what you don’t know – to informed decision-making. Once a leak is identified, you can patch it. You can adjust the hiring plan, realign the budget, or pivot the narrative to match the data. But you can’t fix what you can’t see.

How the Signal Audit Works

The audit doesn’t evaluate slides in isolation – it looks for coherence across the system. Here are the checks that catch most leaks:

Signal I/II Alignment Check: Does your vision require a type of value delivery that your product or business model can’t support? If you’re positioning as a premium solution but pricing like a commodity, something’s misaligned.

Signal II/V Consistency Check: Does your claimed value proposition match what your momentum metrics actually show? If you say your strength is retention but your growth depends on constant new user acquisition, your value signal is weak.

Signal III/V Linkage Check: Is your operational system capable of producing the momentum you’re showing? If your margins are improving but your team is underwater, or if your growth is accelerating but your hiring is frozen, the system can’t sustain what the momentum suggests.

Signal IV Reality Check: Is your market strategy grounded in evidence or aspiration? If your deck shows a massive TAM but you can’t name your first 100 buyers or your wedge into the market, you’re not building on solid ground.

The audit produces a signal profile – a map of where you’re strong and where you’re leaking. That profile tells you what to fix before your next board meeting, your next fundraise, or your next major decision.
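
For readers who think in code, the four checks above can be sketched as simple rules over facts pulled from a deck. This is an illustrative sketch only, not the audit itself: the `DeckFacts` fields, the `audit` function, and the 0.8 threshold are all assumptions made up for this example, and a real audit relies on judgment rather than boolean flags.

```python
from dataclasses import dataclass


@dataclass
class DeckFacts:
    """Hypothetical facts extracted from a deck; field names are illustrative."""
    positioning: str                    # e.g. "premium" or "commodity"
    pricing_tier: str                   # e.g. "premium" or "commodity"
    claims_retention_strength: bool
    growth_share_from_new_users: float  # fraction of growth, 0.0 to 1.0
    growth_accelerating: bool
    hiring_frozen: bool
    named_first_buyers: int


def audit(deck: DeckFacts) -> dict[str, list[str]]:
    """Return a signal profile: each check mapped to the leaks it found."""
    profile: dict[str, list[str]] = {
        "I/II alignment": [],
        "II/V consistency": [],
        "III/V linkage": [],
        "IV reality": [],
    }
    if deck.positioning == "premium" and deck.pricing_tier == "commodity":
        profile["I/II alignment"].append("premium positioning, commodity pricing")
    # The 0.8 cutoff is an assumed threshold, not part of the framework.
    if deck.claims_retention_strength and deck.growth_share_from_new_users > 0.8:
        profile["II/V consistency"].append("retention claim, acquisition-driven growth")
    if deck.growth_accelerating and deck.hiring_frozen:
        profile["III/V linkage"].append("accelerating growth, frozen hiring")
    if deck.named_first_buyers < 100:
        profile["IV reality"].append("cannot name first 100 buyers")
    return profile


# Example: a deck with a hiring freeze and a thin buyer list.
deck = DeckFacts(
    positioning="premium",
    pricing_tier="premium",
    claims_retention_strength=True,
    growth_share_from_new_users=0.5,
    growth_accelerating=True,
    hiring_frozen=True,
    named_first_buyers=12,
)
profile = audit(deck)
for check, leaks in profile.items():
    print(check, "->", leaks or "no leak found")
```

The point of the structure is that each rule compares two parts of the deck against each other, which is why no slide can pass or fail on its own.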

Building Systems to Catch Mistakes Early

The most successful founders aren’t the ones who never make mistakes – they’re the ones who build systems to catch mistakes before they compound. Detecting logic leaks is one of those systems.

It’s not about perfection. It’s about knowing where your story has drifted from the facts, and closing that gap before it costs you a round, a hire, or a year of momentum.

If you can’t see the cracks in your own logic, you’re not looking closely enough. The Signal Audit is how you start looking.