There’s a specific moment of panic that happens when a company tries to replace a messy human process with a precise automated one. It usually starts as an efficiency play – a plan to automate workflows, deploy agents, save time, and reduce overhead. But the moment the team attempts to map the actual logic, they hit a wall. The process they thought existed turns out to be a hallucination, held together by quiet daily improvisations that happen in meetings and Slack threads.

In a manual environment, ambiguity isn’t a bug. It’s how things get done. When two departments have conflicting goals or a workflow lacks clear decision criteria, someone schedules a meeting and negotiates a workaround in real time. The salesperson checks with finance about whether to approve a discount. The product manager asks engineering whether a feature request is feasible before committing to the customer. The operations lead manually routes an edge case because the system doesn’t have logic for handling it. None of this is documented. It just happens, over and over, until it becomes normalized as “how we work.”

Meetings serve as a high-frequency patch for broken logic. They’re not just coordination – they’re manual overrides for a system that doesn’t actually function on its own. Humans are remarkably good at navigating ambiguity. They interpret vague instructions, read between the lines, make judgment calls when rules conflict, and adjust on the fly when reality doesn’t match the documented process. This flexibility is what allows companies to scale past the point where their actual operational design should have collapsed.

But machines can’t do any of that. An AI agent can’t hop on a quick call to clarify a vague instruction. It can’t tell which stakeholder to prioritize when the rules conflict. It can’t make a judgment call based on context that was never written down. It requires explicit logic: if this happens, then do that. If those conditions are met, route here. If they’re not, route there. Every scenario has to be defined. Every exception has to have handling logic. Every decision point has to be mapped.
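To make "explicit logic" concrete, here is a minimal sketch of what a discount approval looks like once every branch is written down. The field names, thresholds, and queue names are all hypothetical, invented for illustration rather than taken from any real system.

```python
from dataclasses import dataclass


@dataclass
class DiscountRequest:
    # Hypothetical fields, chosen only to illustrate explicit rules.
    deal_value: float
    discount_pct: float
    customer_tier: str  # assumed values: "standard" or "strategic"


def route_discount(req: DiscountRequest) -> str:
    """Return the queue a discount request is routed to.

    Every branch is spelled out; there is no "it depends" path.
    The thresholds (10%, 25%) are assumptions made for this example.
    """
    if req.discount_pct < 0 or req.discount_pct > 100:
        return "reject_invalid"
    if req.discount_pct <= 10:
        return "auto_approve"
    if req.discount_pct <= 25 and req.customer_tier == "strategic":
        return "finance_review"
    # Everything else falls through to a single, named default.
    return "vp_approval"
```

The code itself is trivial. What is hard is getting sales and finance to agree on the numbers that go into it, which is exactly the conversation that used to happen in a meeting, one deal at a time.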

Attempting to hand these processes to automation reveals the hidden tax of undefined logic. The project starts with confidence – automate the approval workflow, automate the lead routing, automate the customer onboarding sequence. Then the team starts asking basic questions. What exactly triggers the approval? How do we define a qualified lead? What happens if a customer submits incomplete information? And the answers turn out to be: “It depends.” “We usually figure it out in the moment.” “Someone makes a call based on the situation.”

None of that translates into automation. You can’t code “it depends” into a system. You can’t tell an agent to “figure it out in the moment.” Every place where human judgment fills in for missing logic becomes a blocker. The automation project stalls because the process underneath it was never actually a process – it was a series of judgment calls pretending to be a workflow.

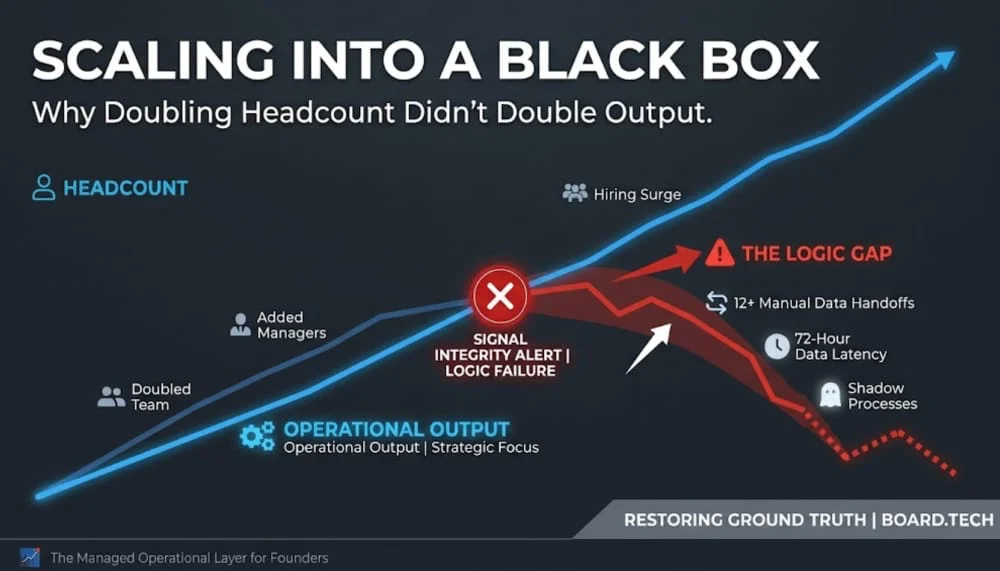

This is why many companies discover their operations don’t make sense only after the automation attempt fails. The failure isn’t technical. The tools work fine. The problem is structural. The business was running on human flexibility that compensated for poor design, and no one realized it until they tried to remove the humans. What looked like operational maturity was actually operational debt being serviced daily through meetings and manual interventions.

This creates a dangerous decision trap for leadership. The pressure to scale suggests you should automate quickly and refine the logic later. Move fast, deploy agents, optimize as you go. But you cannot safely automate a process that hasn’t been structurally validated. If you pour capital and engineering effort into automating a system with broken logic, you’re not creating efficiency – you’re scaling a liability.

The automation will do exactly what you tell it to do, which means it will execute the flawed logic repeatedly and at volume. The pricing tool will apply the wrong discount structure to every deal because the rules were never clearly defined. The routing system will send tickets to the wrong team because the criteria for categorization were always subjective. The onboarding sequence will create confusion because the steps were designed around what felt right to the person doing it manually, not around what actually drives successful adoption.

Now, instead of one person manually creating the problem, the system is creating it automatically for every transaction. And because it’s automated, it happens faster and at a larger scale before anyone notices. What used to be a localized issue that someone could catch and fix in the moment becomes a systemic problem that requires stopping the automation, debugging the logic, redesigning the process, and then re-implementing. By the time you realize the automation is broken, you’ve already built workflows, trained teams, and set expectations around it. Rolling back is expensive. Fixing it is expensive. Living with it is expensive.

This is why automation functions as an honesty test for how a business actually works. It forces you to make explicit every decision that was previously implicit. It exposes every place where “we figure it out as we go” was covering for the fact that no one had designed the system properly. It reveals every place where meetings were being used to patch logic that should have been defined at the process level.

The discomfort comes from the recognition that much of what felt like operational sophistication was actually operational chaos being managed through human effort. The company looked like it was functioning smoothly because people were constantly compensating for structural gaps. Remove that compensation layer, and the gaps become immediately visible.

Some companies respond to this by trying to automate around the ambiguity. They add manual review steps to the automated workflow. They build escalation paths so edge cases get routed back to humans. They create override mechanisms so someone can intervene when the automation produces the wrong result. Within a few months, they’ve built a system that’s more complex than the manual process it replaced, one that now requires technical expertise to maintain on top of the operational expertise needed to handle the exceptions.

Other companies realize the automation failure is revealing something more fundamental. The processes don’t work because the underlying decisions about how the business should operate were never made clearly. The logic is ambiguous because no one took the time to define what the rules should actually be. The exceptions are constant because the system was designed around the happy path, and no one thought through what happens when reality doesn’t match the ideal case.

Fixing this requires going back to the beginning and designing the process properly before trying to automate it. That means defining clear decision criteria. Mapping out every scenario that needs to be handled. Specifying what should happen in each case. Identifying where human judgment is actually necessary versus where it’s just covering for missing logic. Building the operational architecture that can function without constant intervention, and only then layering automation on top of it.
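One way to do that mapping before any automation is built is a plain decision table, checked for gaps. The sketch below assumes a hypothetical lead-routing process. The condition names, values, and outcomes are invented for illustration, and the `human_review` marker is just one possible way to make genuine judgment calls explicit instead of leaving them as holes.

```python
from itertools import product

# Hypothetical conditions for a lead-routing process.
CONDITIONS = {
    "budget_confirmed": (True, False),
    "company_size": ("small", "mid", "enterprise"),
}

# Marker for scenarios where human judgment is a deliberate choice.
HUMAN = "human_review"

# One outcome per scenario, keyed by (budget_confirmed, company_size).
DECISION_TABLE = {
    (True, "small"): "self_serve",
    (True, "mid"): "sales_team",
    (True, "enterprise"): HUMAN,
    (False, "small"): "nurture_campaign",
    (False, "mid"): "nurture_campaign",
    # (False, "enterprise") is left out on purpose to show a gap.
}


def find_gaps(conditions, table):
    """Return every combination of condition values with no defined outcome."""
    all_scenarios = product(*conditions.values())
    return [scenario for scenario in all_scenarios if scenario not in table]


gaps = find_gaps(CONDITIONS, DECISION_TABLE)
print(gaps)  # [(False, 'enterprise')]
```

Each entry in `gaps` is a scenario the business has not actually decided how to handle. The useful distinction is between rows marked `human_review`, where someone chose to keep a person in the loop, and rows that are simply missing, where a person has been filling in without anyone noticing.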

This is slower and less exciting than deploying AI agents to “handle everything.” It requires admitting that the company’s operations aren’t as mature as leadership thought. It means pausing automation initiatives to fix foundational problems. It often reveals that the company has been scaling on human effort rather than on sound operational design, and that realization is uncomfortable.

But the alternative is worse. You can automate broken processes and discover the problems only after they’ve been scaled across the entire organization. You can keep adding complexity to compensate for unclear logic until the system becomes unmaintainable. You can continue relying on meetings to patch operational gaps until the coordination overhead becomes the primary thing limiting growth.

Or you can treat automation failures as diagnostic information. When the attempt to automate a process reveals that the logic underneath doesn’t actually work, that’s a valuable signal. It’s telling you where the operational debt is concentrated. It’s showing you which processes were being held together by human improvisation rather than sound design. It’s forcing the question of whether you’re building on a solid foundation or just moving fast on top of structural problems that will eventually become impossible to ignore.

The choice isn’t between manual work and AI. The choice is between slowing down to fix the underlying decision architecture or accelerating toward a collapse that happens faster because you automated it. Automation multiplies whatever operational logic already exists. If that logic is sound, automation creates leverage. If it’s broken, automation creates compounding failure at speed.

Most companies discover which one they have only after they’ve committed to the automation and started seeing the results. By then, the cost of unwinding the decision is much higher than it would have been to validate the logic first.

The hard truth is that if your operations require constant meetings to function, you don’t have operations – you have managed chaos. And managed chaos doesn’t automate. It just becomes automated chaos, which is significantly more expensive to live with and much harder to fix once it’s running at scale.